Amodei’s Country of Geniuses Already Exists. It Has No Lights.

The CEO of Anthropic wrote 30,000 words about superintelligent AI risk. He got the theology right and the architecture wrong.

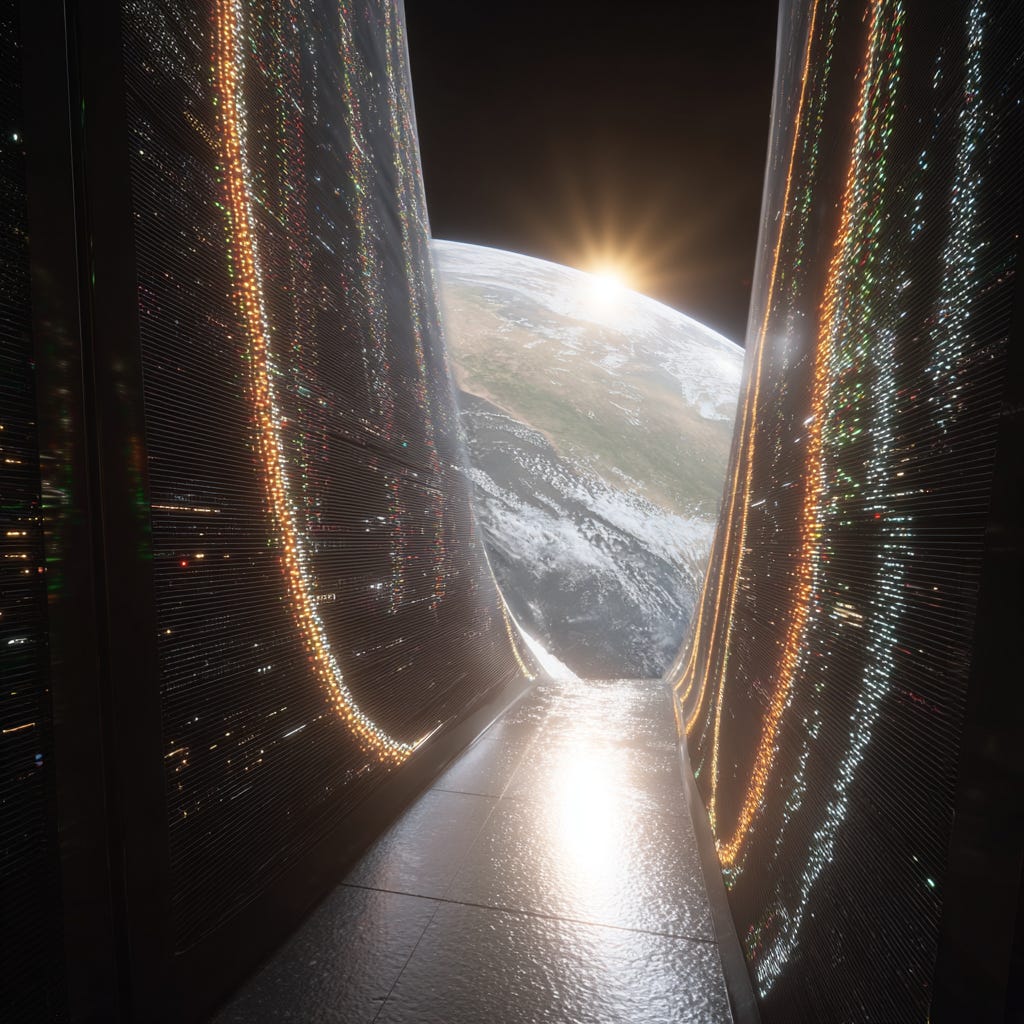

The future isn’t a datacenter.

It’s a dark economy.

Somewhere in Changping, China, a factory produces one smartphone per second. No humans. No lights. No breaks. One phone every heartbeat of every hour of every day.

Machines building products in perfect blackness, and nobody at Anthropic seems to have noticed that Dario Amodei’s “country of geniuses in a datacenter” already has a working prototype. It just doesn’t look the way he imagines.

Amodei published “The Adolescence of Technology” in January 2026.

Thirty thousand words mapping five categories of civilizational risk from powerful AI.

Biosecurity nightmares.

Autonomous weapons.

Labor displacement that could shatter the social contract.

Power concentration that would make Rockefeller blush.

Unknown unknowns lurking in the acceleration.

I read it with the strange vertigo of hearing someone describe your house from the outside. Getting the number of windows right while completely missing the foundation.

After twenty years in Fortune 500 boardrooms and dozens of startups, watching 95% of enterprise AI projects die not from technical failure but from organizational incomprehension, I can tell you: Amodei’s risk taxonomy is intellectually honest, technically rigorous, and built on an architectural premise that physics has already vetoed.

His essay assumes centralization.

The future is dark.

And distributed.

And already here.

The Dark Economy Doesn’t Wait for Permission

Amodei envisions fifty million superintelligent instances in a datacenter. A singular “country of geniuses” running at 10 to 100x human speed, coordinated centrally, requiring societal governance frameworks to prevent catastrophe.

I envision something stranger.

Something already forming in the phosphorescent glow of server racks scattered across European farmland, Texas industrial parks, and abandoned European manufacturing sites.

A dark startup is a company where one founder orchestrates dozens of AI agents. AI product managers synthesizing market signals into specifications while the founder sleeps. AI architects proposing system designs for morning review. AI developers shipping features before breakfast. AI QA catching regressions before dawn. AI marketing adjusting campaigns based on overnight performance data.

One human.

Dozens of AI machines.

Complete operational continuity.

This is not Amodei’s centralized singleton.

This is a thousand founders, each commanding their own miniature country of geniuses, deploying from modular containers that bypass grid dependency entirely. The “country” doesn’t materialize in one place. It metastasizes everywhere, simultaneously, in facilities that operate without light.

Gartner estimates 60% of manufacturers will adopt lights out operations by 2026. The dark factory market hit $119 billion in 2024. China deployed over one million industrial robots by 2025, more than the rest of the world combined.

Amodei worries about a hypothetical future.

The dark economy is a measurable present in the making.

Where Amodei Is Absolutely Right

Let me be clear about something uncomfortable: most of his risks are real. I partially agree with his thesis. In some places, violently.

His biosecurity framework is the most responsible thing any AI CEO has published. The classifiers Anthropic deploys to block bioweapon related outputs cost 5% of inference compute. That’s genuine commercial sacrifice, not safety theater. His warning that AI could walk someone through the entire bioweapon production process interactively over weeks or months is not hypothetical terror. It’s engineering probability.

His labor displacement analysis tracks precisely with what I see. AI models went from struggling with basic arithmetic to writing production code in three years. His prediction that 50% of entry level white collar jobs face disruption within one to five years aligns with MIT’s 2025 research showing top performers doubling productivity while the bottom third gained almost nothing. AI amplifies whatever capabilities you already possess. If you lack deep judgment, AI will not supply it. It will expose the gap.

His power concentration warning deserves more attention than it’s getting. The IMF’s Working Paper 2025/068 confirms what Piketty theorized: capital income and wealth inequality always increase with AI adoption. The wealth Gini coefficient rises 2 to 7 percentage points depending on adoption scenario. Not over decades. Over years.

These are not hypothetical risks from a hypothetical future.

These are current trajectories with measurable acceleration.

Where I diverge is not on whether these dangers exist.

It’s on the architecture through which they manifest.

And architecture determines everything about how you defend against them.

The Centralization Fallacy

Amodei’s five risk categories all assume a single architectural premise: concentrated power in concentrated infrastructure. His “country of geniuses” sits in one place, controlled by one entity, requiring one governance framework to contain.

Physics has already rejected this application.

There are 2,300 gigawatts of AI infrastructure sitting in US grid interconnection queues. Median wait time: five years. The entire US grid operates at roughly 1,200 gigawatts. Deploying what’s already queued would mean adding nearly twice the capacity of the existing national grid. Community opposition has blocked $64 billion in Big Tech infrastructure projects. These are not regulatory failures. They are the collision between exponential technology demands and linear infrastructure capacity.

I build modular AI data centers. The physical kind: 1MW compute containers with NATO grade security, biogas and solar microgrids, deploying in 120 days while traditional facilities wait five years for an interconnection agreement.

We achieve 42.3% cost advantages over centralized approaches like CoreWeave.

Our five container deployment model delivers 187.2% ROI over four years.

This matters for Amodei’s thesis because it changes the geometry of risk.

A distributed architecture, with thousands of facilities of a few megawatts each replacing dozens of gigawatt hyperscale centers, doesn’t just reduce cost.

It restructures power.

Multiple actors control multiple facilities across multiple jurisdictions.

No single entity accumulates the concentrated compute that makes Amodei’s autonomous weapons swarm or global surveillance apparatus feasible at the scale he fears.

Amodei wants surgical regulation to prevent concentration.

I’m watching physics enforce distribution whether regulation exists or not.
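
For readers who want to check the geometry, here is a rough back-of-envelope sketch in Python. The constants are the figures quoted in this section; the function names are illustrative, and the comparison assumes nameplate power only, ignoring cooling overhead, utilization, and redundancy.

```python
import math

# Figures quoted in this section (nameplate values, taken at face value).
QUEUE_GW = 2_300          # capacity sitting in US interconnection queues
GRID_GW = 1_200           # approximate size of the existing US grid
CONTAINER_MW = 1          # one modular compute container
CONTAINERS_PER_SITE = 5   # the five container deployment model

MW_PER_GW = 1_000


def queue_to_grid_ratio(queue_gw: float = QUEUE_GW, grid_gw: float = GRID_GW) -> float:
    """How many times the existing grid the queued capacity represents."""
    return queue_gw / grid_gw


def containers_for(capacity_gw: float, container_mw: float = CONTAINER_MW) -> int:
    """Containers needed to match a capacity, assuming nameplate power only."""
    return math.ceil(capacity_gw * MW_PER_GW / container_mw)


def sites_for(capacity_gw: float, per_site: int = CONTAINERS_PER_SITE) -> int:
    """Five container sites needed to match a capacity (hypothetical helper)."""
    return math.ceil(containers_for(capacity_gw) / per_site)


if __name__ == "__main__":
    print(f"Queue is {queue_to_grid_ratio():.2f}x the existing grid")  # 1.92x
    print(f"1 GW campus = {containers_for(1)} containers")             # 1000
    print(f"1 GW campus = {sites_for(1)} five container sites")        # 200
```

Under those assumptions, a single gigawatt campus corresponds to a thousand containers, or two hundred separate sites that could sit with two hundred different owners. That is the restructuring of power in miniature.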

The Risk He Never Named

Here’s where it gets strange. And where I depart from comfortable agreement into territory that should keep every executive reading this awake tonight.

Amodei’s five risk categories miss a sixth: the dark economy itself as a civilizational force.

When Piketty’s r > g formula meets the dark startup model, you get something unprecedented. A single founder commanding AI agents can produce the output of fifty knowledge workers. The return on capital doesn’t just exceed economic growth. It eclipses it. And the infrastructure to enable this doesn’t require Amodei’s hypothetical datacenter singleton. It requires a modular container, a power source, and a fiber optic connection.

Three resources will define who accumulates wealth at this new velocity: land for data centers, access to AI compute, and electricity. Whoever controls these inputs controls the means of production in the dark economy. This is not metaphor. This is industrial economics applied to the age of artificial intelligence.

Amodei worries about a world where AI companies capturing $3 trillion in annual revenue creates dangerous concentration. He’s right to worry. But his framing assumes concentration happens through centralized AI providers. The darker scenario is fragmentation: ten thousand dark startups, each running their own compute infrastructure, each operating 24/7 without employees, collectively displacing entire industries while no single entity is large enough to regulate.

You can regulate Anthropic.

You can regulate OpenAI.

Good luck regulating ten thousand founders with forty seven agents each, operating from modular containers on agricultural land across thirty countries.

This is the risk Amodei’s centralized framing cannot see.

Not a country of geniuses in a datacenter.

A diaspora of geniuses in darkness.
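
To put rough numbers on that diaspora, here is a minimal Python tally. The inputs are the figures used in this section; the even spread across countries and the uniform fifty worker equivalence are simplifying assumptions for illustration, not forecasts, and the function name is hypothetical.

```python
# Inputs taken from this section; everything derived is illustrative.
FOUNDERS = 10_000
AGENTS_PER_FOUNDER = 47
WORKERS_PER_FOUNDER = 50   # "the output of fifty knowledge workers"
COUNTRIES = 30


def swarm_summary(
    founders: int = FOUNDERS,
    agents_per_founder: int = AGENTS_PER_FOUNDER,
    workers_per_founder: int = WORKERS_PER_FOUNDER,
    countries: int = COUNTRIES,
) -> dict:
    """Aggregate scale of the swarm, assuming every founder is identical."""
    return {
        "total_agents": founders * agents_per_founder,
        "worker_equivalents": founders * workers_per_founder,
        # Assumes founders are spread evenly, which they would not be.
        "avg_founders_per_country": founders / countries,
    }


if __name__ == "__main__":
    for name, value in swarm_summary().items():
        print(f"{name}: {value:,.0f}")
```

On these inputs the swarm totals 470,000 agents doing the work of roughly half a million knowledge workers, yet the average jurisdiction would see only a few hundred one person companies.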

The Four Phases Meet the Five Risks

Let me map what I see coming against what Amodei fears.

Augmentation (2025 to 2027). We’re here now. Team sizes compress 5x to 10x. McKinsey reports 20% to 45% productivity gains. Amodei’s classifiers and constitutional AI work at this phase. The infrastructure bottleneck limits proliferation. His risks are real but constrained.

Autonomy (2027 to 2030). Dark startups become dominant. 60% of US venture capital in 2025 already flowed to rounds of $100 million or more because capital concentrates where velocity concentrates. Amodei’s labor displacement manifests here with brutal precision. But the threat vector isn’t “AI replaces your job.” It’s “a founder with forty seven agents replaces your company.”

Infrastructure Wars (2030 to 2033). The battle shifts from software to physics. Land, energy, compute become contested resources. Whoever pioneers energy sovereign, modular deployment at scale gains asymmetric advantage that makes algorithmic capability secondary.

The New Feudalism (2033 to 2036). Wealth stratification completes. Those who own AI infrastructure occupy positions analogous to landowners in agrarian economies. But the path runs through distributed dark infrastructure, not centralized datacenter kingdoms. The feudalism is architectural, not political.

What Amodei Should Build

I respect Anthropic’s work on Constitutional AI. Training Claude at the level of identity and values rather than instruction lists is architecturally sound. Their interpretability research represents genuine science. The public disclosure of system cards reflects commitment most competitors lack.

But Amodei treats infrastructure as someone else’s problem.

His risk framework assumes the datacenter exists. His policies assume centralized control points. His economics assume AI revenue concentrates in identifiable companies. All fail in a dark economy where compute distributes across energy sovereign nodes that bypass traditional infrastructure.

Anthropic should be thinking about how Constitutional AI applies when Claude runs in a modular container on Czech farmland, operated by a single founder with no employees, serving clients across three continents while the founder sleeps. That’s a deployment architecture we’re building right now at DCXPS.

The question isn’t whether AI models will have good values. The question is whether governance frameworks designed for centralized providers survive contact with distributed, dark, energy sovereign compute.

The Real Rite of Passage

Amodei frames humanity’s test as whether our institutions can mature fast enough to govern superintelligent AI. It’s a beautiful formulation. Worthy of the scene from Carl Sagan’s Contact that he opens with.

But the test is already different from what he describes.

The real rite of passage is whether we can build distributed infrastructure fast enough that no single actor accumulates the concentrated power Amodei rightly fears. Whether the dark economy emerges as a federation of sovereign compute nodes rather than a monarchy of hyperscale datacenters. Whether the ten thousand founders with their forty seven agents each create a new form of distributed capitalism or a new feudalism where infrastructure owners replace landowners as the permanent aristocracy.

Amodei wrote an essay about controlling a giant. The future is a swarm. You don’t govern swarms with constitutions written for giants.

I’ve founded 110+ startups. The pattern never changes. The technology everyone debates is never the technology that transforms. Centralized mainframes gave way to distributed PCs. Monolithic applications gave way to microservices. Hyperscale datacenters will give way to modular, energy sovereign compute nodes scattered across the physical world like digital mycelium.

Amodei’s risks are real. His architecture is wrong. And the dark economy forming in the spaces between his assumptions will reshape civilization before his governance frameworks finish their first draft.

Somewhere in Changping, another phone slides off the line.

No lights.

No workers.

No ceremony.

The future doesn’t announce itself.

It just ships.

JF is a serial entrepreneur, founder of DCXPS modular AI data centers, and author of the AI Executive Transformation Program in Prague.

He writes at AI Off the Coast about the uncomfortable truths of AI implementation and our AI future.