Why Elon Musk Is Importing Power Plants and OpenAI Is Lobbying for Electricity — And What It Means for Every Infrastructure Bet You Make in 2026

Elon Musk said it at Davos. OpenAI wrote it to the White House. The binding constraint on AI isn’t memory or GPU supply. It’s electricity.

At Davos in January, Elon Musk made a prediction that received far less attention than it deserved.

More chips will be produced than can ever be turned on. Not because demand is soft. Because there is not enough power to run them.

Shortly after, OpenAI wrote directly to the White House warning of what it called an “electron gap” threatening US AI leadership. Its message was blunt:

“Electrons are the new oil.”

The two most aggressive AI operators on earth are not worried about models or chips.

They are worried about electricity.

That single fact should reframe how every investor, operator, and executive thinks about where value accumulates in the AI transition.

I have built, invested in, or exited more than 110 startups across B2B technology, enterprise software, and infrastructure. I write this newsletter because I cannot find the analysis I actually need anywhere else.

And right now I am building DCXPS — deployable AI compute infrastructure — inside the exact constraint this article describes. I am not observing this from the outside. I am operating inside it.

The Numbers Are Not Subtle

1,000 TWh — projected global data center electricity consumption in 2026, roughly Japan’s entire annual electricity use, with AI the fastest-growing driver. (IEA)

80 GW → 150 GW — US data center power demand, 2025 to 2028. Nearly doubled in three years. (Bloom Energy, January 2026)

24 GW → 100 GW — US capacity needed by 2030. A fourfold expansion. In four years. (Wood Mackenzie, May 2026)

160 weeks — current lead time for substation transformers. Three years. To get the electrical equipment needed to connect a building to the grid.

$400B+ — spent by five technology companies on AI infrastructure in 2025 alone, with a further 75% increase projected for 2026. (IEA)

$2.4 trillion — the addressable grid infrastructure opportunity through 2030, according to Quanta Services CEO Duke Austin.

The grid was not built for this. And it cannot be rebuilt quickly.
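The headline figures are easier to compare once they are annualized. Below is a minimal back-of-envelope sketch in Python. It assumes the 100 GW target is reached over the four years the text cites (2026 to 2030) and treats the 75% rise as applying to the $400B 2025 baseline. The `cagr` helper is a hypothetical name used only for illustration.

```python
# Back-of-envelope check on the figures quoted above.
# Assumption: the 24 GW -> 100 GW projection runs 2026 -> 2030 (four years),
# as the text's "in four years" implies.

def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate implied by moving from start to end over `years`."""
    return (end / start) ** (1 / years) - 1

demand_growth = cagr(80, 150, 3)      # US data center demand, GW, 2025 -> 2028
capacity_growth = cagr(24, 100, 4)    # US capacity needed, GW, to 2030
transformer_years = 160 / 52          # substation transformer lead time, weeks -> years
capex_2026 = 400 * 1.75               # $B: $400B 2025 baseline plus a projected 75% rise

print(f"Demand growth:      {demand_growth:.1%} per year")
print(f"Capacity growth:    {capacity_growth:.1%} per year")
print(f"Transformer wait:   {transformer_years:.1f} years")
print(f"Implied 2026 capex: ${capex_2026:.0f}B+")
```

Annualized, the 2030 capacity target implies roughly 43% compound growth per year, well above the roughly 23% implied by the 2025 to 2028 demand curve. The transformer lead time alone consumes about three of those years.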

Three Reasons The Current Architecture Fails

1. The density is wrong.

Hyperscale facilities were designed for racks drawing 8 to 17 kilowatts. Current AI racks already exceed 50 kilowatts. Nvidia’s Blackwell architecture targets 120 kilowatts per rack. The next generation targets 200.

You cannot retrofit a facility built for 17-kilowatt racks to serve 120-kilowatt workloads. You would need to rebuild it from the floor up.
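To see why the mismatch is structural, compare what one fixed power budget buys at each rack density. The sketch below is illustrative only. The 10 MW hall budget is a hypothetical figure that does not come from the text, and `racks_supported` is a hypothetical helper. The per-rack figures are the ones quoted above.

```python
# Illustrative only: the 10 MW hall budget is a hypothetical figure, not from the text.
HALL_IT_BUDGET_KW = 10_000   # hypothetical IT power available to one data hall
LEGACY_RACK_KW = 17          # upper end of the 8-17 kW design range cited above
BLACKWELL_RACK_KW = 120      # per-rack target cited for Nvidia Blackwell

def racks_supported(budget_kw: float, rack_kw: float) -> int:
    """Whole racks a fixed power budget can feed at a given per-rack draw."""
    return int(budget_kw // rack_kw)

legacy_racks = racks_supported(HALL_IT_BUDGET_KW, LEGACY_RACK_KW)
ai_racks = racks_supported(HALL_IT_BUDGET_KW, BLACKWELL_RACK_KW)
density_ratio = BLACKWELL_RACK_KW / LEGACY_RACK_KW

print(f"Racks at {LEGACY_RACK_KW} kW:  {legacy_racks}")
print(f"Racks at {BLACKWELL_RACK_KW} kW: {ai_racks}")
print(f"Power per rack position rises {density_ratio:.1f}x")
```

Even if the hall’s total supply were sufficient, each 120 kW position needs about seven times the power delivery of its 17 kW predecessor. Nearly all of that electricity ends up as heat, so it also needs about seven times the heat removal. Both requirements are built into the floor, which is why the argument above points to a rebuild rather than a retrofit.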

2. The timelines are wrong.

A hyperscale data center takes four to seven years from site selection to operational. AI hardware generations cycle every 12 to 18 months.

By the time the facility opens, AI hardware has advanced at least two generations past the workload it was designed to support.
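The gap between build time and hardware cadence can be bounded using only the ranges in the paragraph above. This is a quick sketch. `generations_elapsed` is a hypothetical helper, and it counts only complete generations released during construction.

```python
# Hardware generations that ship while a facility is being built.
# Uses the ranges quoted in the text: 4-7 year builds, 12-18 month hardware cycles.

BUILD_MONTHS = (4 * 12, 7 * 12)   # site selection to operation, in months
CYCLE_MONTHS = (12, 18)           # AI hardware generation cadence, in months

def generations_elapsed(build_months: int, cycle_months: int) -> int:
    """Complete hardware generations released during one build."""
    return build_months // cycle_months

# Fastest build with the slowest cadence gives the lower bound;
# slowest build with the fastest cadence gives the upper bound.
low = generations_elapsed(BUILD_MONTHS[0], CYCLE_MONTHS[1])
high = generations_elapsed(BUILD_MONTHS[1], CYCLE_MONTHS[0])

print(f"Generations shipped during construction: {low} to {high}")
```

Even the most favorable pairing leaves a facility two full generations behind on opening day. The slowest builds can fall as many as seven behind.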

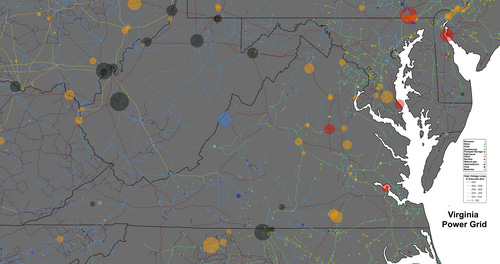

3. The geography is wrong.

Northern Virginia — the largest data center market in the world — has a grid so saturated that its dominant utility publicly acknowledged it could not meet demand despite adding over three gigawatts of capacity.

New grid connections in saturated markets: measured in years. Not months.

This Pattern Has Played Out Before

The internet created extraordinary wealth — but not for the websites. For the fiber. The routers. The CDNs.

Mobile created extraordinary wealth — not for the apps. For the spectrum. The towers. The backhaul.

In every major technology transition, the infrastructure layer captures the structural economics. The application layer captures the headlines.

AI is not different. It is following the same pattern at greater speed and greater physical intensity than any transition before it.

What The Opportunity Actually Looks Like

The operators building modular, energy-first infrastructure — facilities designed around power availability rather than real estate convention, deployable in months rather than years, engineered for the rack densities AI actually requires — are building a position that grid-dependent hyperscale construction cannot replicate on any timeline that matters.

The constraint is not the chip. It is not the model. It is not the talent.

It is the electron.

And whoever solves that first — at the right density, at the right speed, at the right location — captures the structural economics of the next decade.

Musk saw it. OpenAI saw it.

The question is whether you do too.

Jiří Fiala is the founder of DCXPS and writes AI of the Coast, a weekly newsletter on AI infrastructure economics. aiofthecoast.dcxps.com

Where do you think this will stand in five years?