At Last: The Infrastructure Checklist That Tells You Whether Your AI Deployment Has a Power Problem Before You Sign Anything

Capacity market clearing prices for 2026–2027 hit $329.17 per megawatt-day. Two delivery years earlier: $28.92. A roughly 1,038% increase. In two auctions.

Picture this:

Your board approved the AI infrastructure budget in January.

Your CTO signed the co-location agreement in March. The vendor promised power availability by Q3. It is now May and the utility just sent a letter.

The interconnection study will take another 14 months. Construction on the substation upgrade cannot begin until the transformer arrives. Current transformer lead times: 160 weeks.

Your AI deployment is not delayed by a competitor. It is not delayed by a model limitation or a talent gap. It is delayed by an electrical component that takes three years to manufacture and that nobody ordered in time.

AI of the Coast covers the one constraint underneath all of AI that almost nobody in the mainstream conversation is tracking: where the power comes from, and who controls it.

This is the conversation happening in infrastructure decision rooms across the US right now. Not in theory. This week.

The Number That Should Have Stopped Everyone

In July 2025, the largest electricity grid in North America published the results of its annual capacity auction.

Capacity market clearing prices for the 2026–2027 delivery year hit $329.17 per megawatt-day. Two delivery years earlier: $28.92.

A roughly 1,038% increase. In two auctions. In a market stable for over a decade.
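The arithmetic behind that jump is easy to check. A minimal sketch, using only the two prices quoted above (the function name is illustrative):

```python
def percent_increase(old: float, new: float) -> float:
    """Percentage increase going from old to new."""
    return (new - old) / old * 100.0


earlier_price = 28.92   # $/MW-day, the earlier clearing price quoted above
latest_price = 329.17   # $/MW-day, 2026-2027 delivery year, quoted above

increase = percent_increase(earlier_price, latest_price)
multiple = latest_price / earlier_price

print(f"{increase:.0f}% increase, {multiple:.1f}x the earlier price")
```

By straight division the rise is about 1,038%, which is roughly 11.4 times the earlier price.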

The grid operator cited data center growth as a major contributing factor.

Nobody treated it as the warning it was. Out of approximately 12 GW of US data center capacity announced for 2026, only around 5 GW is currently under active construction. The rest — billions of dollars of planned infrastructure — sits stalled. Not by a lack of capital. By a lack of electrons.

What Is Actually Happening Right Now

Companies are hitting an operational wall as AI projects move from isolated pilots to enterprise-wide production. In 2026, some AI workloads will not reach production at all.

Not because the models are not ready.

Because the power is not there.

Data center companies are looking for spare power capacity at every electrical utility they can find — including very non-traditional utilities and cooperatives whose doors have never been knocked on before. That is not a sign of a market finding creative solutions. That is a sign of a market running out of obvious ones.

Interconnection wait times have more than doubled over the past fifteen years. Projects now spend an average of five years in queue before reaching commercial operation. And the queue has a compounding problem: when one project withdraws or significantly changes its specifications, utilities must restudy remaining projects in the queue. This cascading effect means that even well-prepared developers can face years of additional delay through no fault of their own.
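To see how the restudy cascade adds up, here is a toy model. It is a sketch only, and it rests on simplifying assumptions that are not in any utility's actual process: each withdrawal ahead of you triggers exactly one restudy, every restudy costs the same fixed number of months, and withdrawals behind you cost nothing. The function name and all numbers are hypothetical.

```python
def restudy_delay_months(position: int,
                         withdrawn_positions: list[int],
                         months_per_restudy: float) -> float:
    """Extra months of delay for the project at `position` (0 = front of queue).

    Assumption: only withdrawals ahead of the project force a restudy of it.
    """
    restudies = sum(1 for w in withdrawn_positions if w < position)
    return restudies * months_per_restudy


# Hypothetical queue: our project is seventh in line (position 6).
# Projects at positions 1, 3 and 8 withdraw; each restudy takes 6 months.
delay = restudy_delay_months(position=6,
                             withdrawn_positions=[1, 3, 8],
                             months_per_restudy=6.0)
print(f"Added delay: {delay} months")
```

Two of the three withdrawals sit ahead of the project, so under these assumptions it picks up 12 months of delay without changing anything about its own application.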

You can do everything right and still get caught in someone else’s problem.

The Three Conversations Your Peers Are Having

The co-location customer who just found out their facility cannot deliver.

They signed a contract. They budgeted for Q3 power availability. The facility operator just disclosed that the utility interconnection study is running 18 months behind schedule. The substation upgrade is contingent on transformer delivery. Transformers currently have lead times exceeding 160 weeks, up from 140 weeks in 2023. The operator is apologetic. The SLA does not cover grid delays, which fall under force majeure. The legal team is now involved.

The AI workload that was supposed to go live in September is looking at mid-2028 at the earliest.

The enterprise that built its AI roadmap around cloud capacity that is not available.

High-end GPU access is becoming uneven and unpredictable — and expensive at exactly the moment when demand is exploding. While some organisations can lock in capacity years in advance, others are left refreshing dashboards and watching quotas tighten, forced to adjust their plans based on whatever compute happens to be available during the month or quarter.

The 2026 AI roadmap assumed on-demand compute availability. That assumption is now structurally incorrect in the markets that matter most.

The infrastructure team that discovered “powered land” after the fact.

For real estate developers and data center operators, securing powered land — sites with direct access to robust natural gas infrastructure — and deploying flexible gas-fired generation are no longer just options. They are strategic necessities. The teams figuring this out now are six to eighteen months behind the teams who figured it out in 2024. That gap compounds.

What the Smart Operators Did Differently

They asked the power question first. Before the site. Before the vendor. Before the contract.

Many of the facilities navigating this environment successfully rely on a mix of local grid power and independent sources — nuclear, renewable, and on-site generation. The mix is not ideological. It is operational. Whatever delivers firm power fastest, without a multi-year interconnection queue, wins the deployment race.

Cleanview’s February 2026 report projects that 30% of new data center energy capacity will come from on-site generation, up from effectively zero two years ago. That share is forecast to reach 50% as the constraint tightens.

The model: co-locate compute with dedicated power generation. Build or bring the power source to the facility. Bypass the interconnection queue entirely. The AI data center of 2027 will generate its own electricity the same way a 19th-century factory ran its own steam engine.

Not because it wants to.

Because the grid left it no other option.

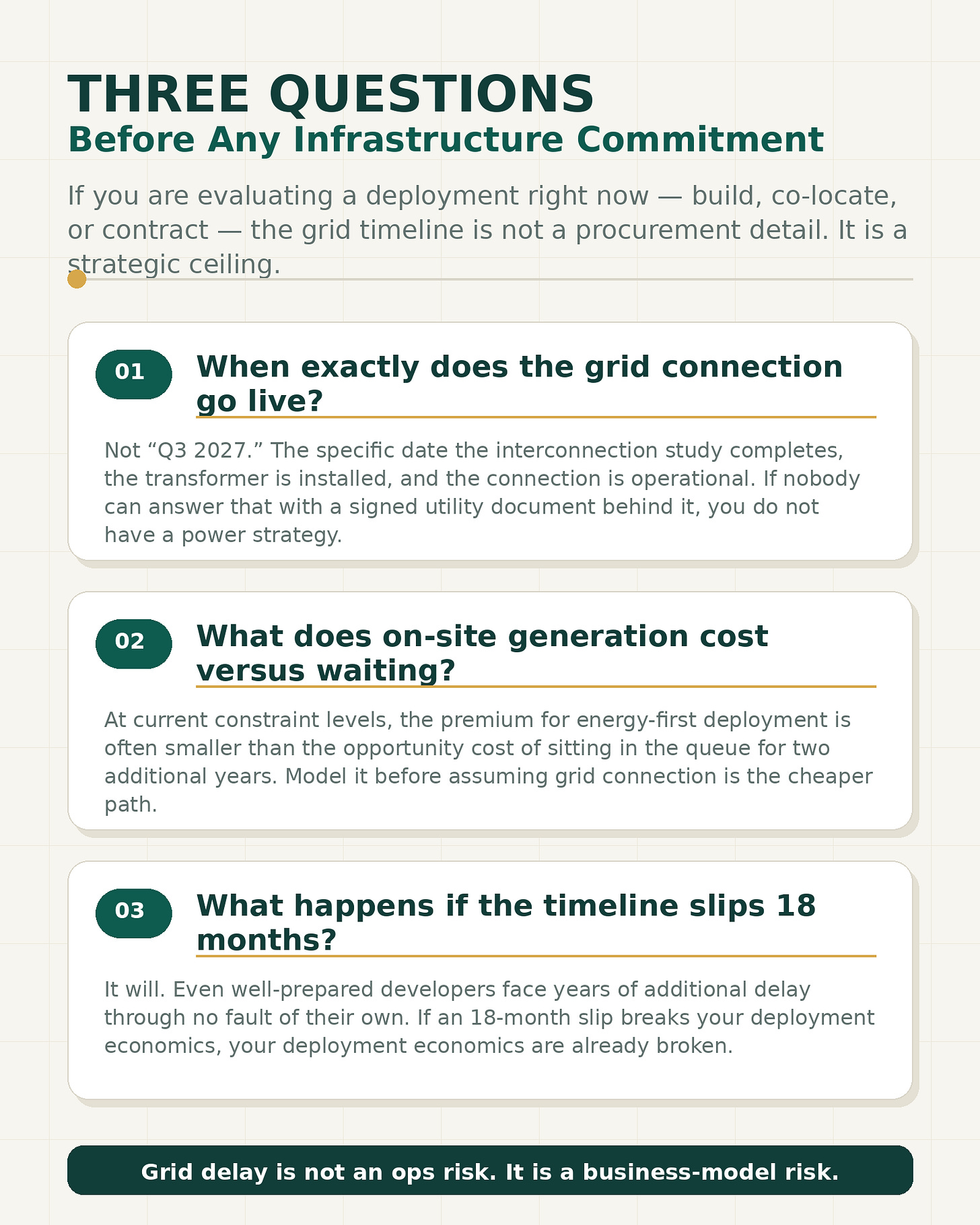

Three Questions Before Any Infrastructure Commitment

If you are evaluating a deployment right now — build, co-locate, or contract — the grid timeline is not a procurement detail. It is a strategic ceiling.

When exactly does the grid connection go live? Not “Q3 2027.” The specific dates on which the interconnection study completes, the transformer is installed, and the connection becomes operational. If nobody can answer that with a signed utility document behind it, you do not have a power strategy.

What does on-site generation cost versus waiting? At current constraint levels the premium for energy-first deployment is often smaller than the opportunity cost of sitting in the queue for two additional years. Model it before assuming grid connection is the cheaper path.

What happens if the timeline slips 18 months? It will. Even well-prepared developers face years of additional delay through no fault of their own. If an 18-month slip breaks your deployment economics, your deployment economics are already broken.
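The second and third questions can be put into a few lines of code. This is a rough sketch, not a financial model: the `PowerOption` class, the `net_value` function, and every dollar figure and month count below are hypothetical placeholders chosen for illustration. Swap in your own quotes and dates.

```python
from dataclasses import dataclass


@dataclass
class PowerOption:
    name: str
    months_to_power: float      # months until the site is energized
    upfront_cost: float         # one-time cost, dollars
    monthly_power_cost: float   # running cost once live, dollars per month


def net_value(option, monthly_value, horizon_months, slip_months=0.0):
    """Value earned while live over the horizon, minus power and upfront costs."""
    live_months = max(0.0, horizon_months - option.months_to_power - slip_months)
    return live_months * (monthly_value - option.monthly_power_cost) - option.upfront_cost


# All figures below are hypothetical placeholders, not market data.
grid = PowerOption("grid", months_to_power=36,
                   upfront_cost=5_000_000, monthly_power_cost=400_000)
onsite = PowerOption("on-site", months_to_power=12,
                     upfront_cost=30_000_000, monthly_power_cost=700_000)

MONTHLY_VALUE = 2_000_000   # assumed value of the running workload, dollars per month
HORIZON = 84                # assumed planning horizon, months

on_time = net_value(grid, MONTHLY_VALUE, HORIZON)
slipped = net_value(grid, MONTHLY_VALUE, HORIZON, slip_months=18)
self_powered = net_value(onsite, MONTHLY_VALUE, HORIZON)

print(f"grid, on schedule:   ${on_time:,.0f}")
print(f"grid, 18-month slip: ${slipped:,.0f}")
print(f"on-site generation:  ${self_powered:,.0f}")
```

With these placeholder inputs the grid path wins if it arrives on schedule, and an 18-month slip reverses the ranking. The point is not the specific numbers. It is that the decision can flip on the slip alone, so the slip belongs in the model from day one.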

The Bottom Line

Nearly half of all US data centers planned for 2026 have been canceled or delayed.

Not because AI demand softened. Not because capital dried up. Because the grid cannot deliver the power on any timeline that matches the speed of AI deployment.

The operators building durable positions this decade are the ones treating grid access as a permanent constraint to route around — not a temporary problem to wait out.

The queue is not clearing.

Build accordingly.

The DCXPS Thesis in One Paragraph: AI compute becomes a metered utility within five years. The infrastructure to deliver that utility must be modular, deployable at the speed of demand, co-located with energy sources rather than constrained by grid interconnection queues, and designed for rack densities that existing hyperscale facilities cannot physically support. The window to build that infrastructure is now. The window to invest in it is also now.

Jiří "Skzites" Fiala is a serial entrepreneur with 110+ startups built, invested in, or exited across B2B technology, enterprise software, and financial services. He is the founder of DCXPS, which builds deployable AI compute infrastructure for a grid-constrained world. AI of the Coast is the newsletter he writes because he cannot find the analysis he actually needs anywhere else — so he builds it himself. If that is the analysis you are looking for, you are in the right place.